The Localhost Lie and the Edge Reality

We’ve all been there: you build a sleek new application, benchmarks are screaming on your local NVMe drive, and the developer experience is pure bliss because you’re using SQLite. But the moment you talk about production, the 'adults' in the room insist on a Postgres cluster. They cite high availability, global distribution, and the inherent 'limitations' of a single-file database. For years, they were right. If your server went down, your data went with it. And even when it stayed up, your latency-sensitive users in Tokyo were suffering through 300ms round-trips to your us-east-1 database.

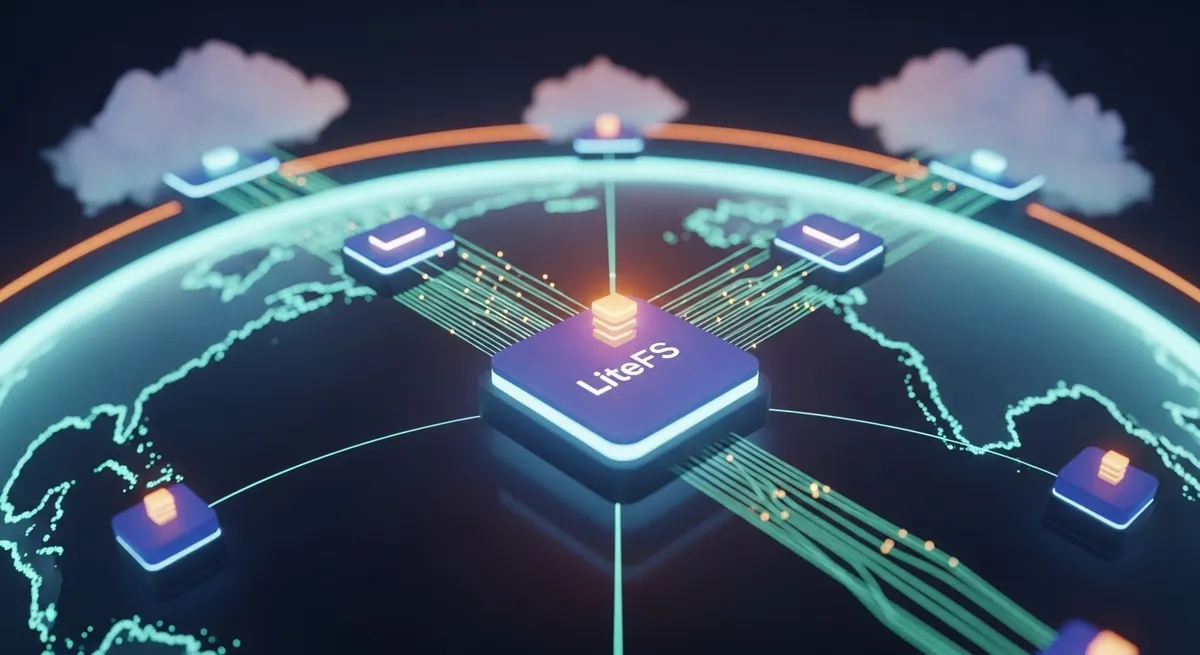

Then came LiteFS. It changed the narrative from 'SQLite is for small apps' to 'SQLite is for distributed systems.' By treating the database as a replicated file system, LiteFS allows us to keep the simplicity of SQLite while gaining the resilience of a distributed cluster. But let’s be clear: if you aren't thinking about how your data moves across the wire, your high-availability strategy is just an illusion. Relying on a single node in a single region is a recipe for a 3 AM page you won't forget.

The Engineering Magic: How LiteFS Actually Works

Unlike traditional database replication that sends SQL statements or WAL frames over the network, LiteFS operates at the file system level using FUSE (Filesystem in Userspace). It essentially sits between your application and the disk. When SQLite writes a page to the database, LiteFS intercepts that change and bundles it into a custom LTX (Lite Transaction) file. This isn't just a blind copy; it’s a checksummed, compressed package of exactly what changed.
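To make the idea concrete, here is a toy sketch of "bundle only the changed pages, checksum them, compress them, ship them." It is not the real LTX format: the JSON layout, the SHA-256 prefix, and the names `pack_changes` and `apply_changes` are all assumptions made up for illustration.

```python
import hashlib
import json
import zlib

PAGE_SIZE = 4096  # SQLite's default page size


def pack_changes(txid: int, pages: dict) -> bytes:
    """Bundle changed pages into one compressed, checksummed blob.

    Toy format for illustration only; this is NOT the LTX file layout.
    `pages` maps 1-based page numbers to PAGE_SIZE-byte page images.
    """
    for page_no, data in pages.items():
        if len(data) != PAGE_SIZE:
            raise ValueError(f"page {page_no} is not {PAGE_SIZE} bytes")
    body = json.dumps(
        {"txid": txid, "pages": {str(n): d.hex() for n, d in sorted(pages.items())}}
    ).encode()
    compressed = zlib.compress(body)
    digest = hashlib.sha256(compressed).digest()  # 32-byte checksum prefix
    return digest + compressed


def apply_changes(db: bytearray, blob: bytes) -> int:
    """Verify the checksum, then overwrite the changed pages in a replica copy."""
    digest, compressed = blob[:32], blob[32:]
    if hashlib.sha256(compressed).digest() != digest:
        raise ValueError("checksum mismatch: refusing to apply change set")
    payload = json.loads(zlib.decompress(compressed))
    for page_no, hex_data in payload["pages"].items():
        offset = (int(page_no) - 1) * PAGE_SIZE  # SQLite numbers pages from 1
        if len(db) < offset + PAGE_SIZE:
            db.extend(bytes(offset + PAGE_SIZE - len(db)))  # grow the file
        db[offset:offset + PAGE_SIZE] = bytes.fromhex(hex_data)
    return payload["txid"]
```

The point of the sketch is the shape of the data flow: the replica never sees SQL, only page images it can verify before applying.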

These LTX files are then streamed from the primary to replica nodes across the globe. On platforms like Fly.io, this means your application in Sydney has a byte-for-byte copy of the database living on its own local disk, updated in near real-time. This architecture transforms your p95 read latencies from 150ms cross-continent hops to sub-1ms local lookups. As Kent C. Dodds noted during his migration, this shift largely defuses the 'N+1 query problem': the extra queries still run, but the cost of each one drops to almost zero when there is no network involved.
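A quick back-of-the-envelope calculation shows why the N+1 pattern stops hurting. The sketch below reuses the latency figures from the paragraph above (150ms remote, 1ms as a generous upper bound for local). The list size of 50 and the helper name `total_query_time_ms` are assumptions for illustration, and the model ignores query execution time entirely.

```python
def total_query_time_ms(queries: int, per_query_ms: float) -> float:
    """Total time spent waiting on sequential queries, ignoring execution cost."""
    return queries * per_query_ms


# An N+1 page: 1 query for a list of 50 items, then 1 query per item.
n = 50
remote_ms = total_query_time_ms(n + 1, 150.0)  # cross-continent round-trips
local_ms = total_query_time_ms(n + 1, 1.0)     # local disk, upper bound

if __name__ == "__main__":
    print(f"remote: {remote_ms:.0f} ms, local: {local_ms:.0f} ms")
```

Under these assumptions, the same sloppy access pattern costs several seconds over the network and a few dozen milliseconds locally.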

Leases, Primaries, and the Single-Writer Constraint

We have to talk about the 'Single Writer' elephant in the room. In a LiteFS cluster, only one node is the 'Primary' at any given time, and every write has to go through it. This is managed through distributed leases (typically using Consul). If the primary node vanishes, its lease expires and another candidate node acquires it to become the new primary; there is no Raft-style election involved. It’s elegant, but it comes with a practical ceiling. Because LiteFS uses FUSE, every write pays for extra context switches between kernel and user space, which typically caps write throughput at around 100 transactions per second. For most SaaS applications, this is plenty. For a high-frequency trading platform? Stick to Postgres.
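In practice, the single-writer rule means your application has to know whether it is running on the primary before it attempts a write. As I understand the LiteFS docs, replicas expose a `.primary` file in the mount directory containing the primary's hostname, and that file is absent on the primary itself. The sketch below builds on that behavior; the helper names and the returned strings are hypothetical, and how you actually forward the request is left to your platform.

```python
from pathlib import Path
from typing import Optional


def current_primary(mount_dir: str) -> Optional[str]:
    """Return the primary's hostname, or None if this node appears to be primary.

    Assumes the LiteFS convention of a `.primary` file that exists only on
    replicas. `mount_dir` is wherever your LiteFS FUSE mount lives.
    """
    marker = Path(mount_dir) / ".primary"
    try:
        return marker.read_text().strip()
    except FileNotFoundError:
        return None


def route_write(mount_dir: str) -> str:
    """Decide what to do with a write request (hypothetical routing labels)."""
    primary = current_primary(mount_dir)
    if primary is None:
        return "handle-locally"
    return f"forward-to:{primary}"
```

A web handler would call `route_write` before any mutating request and either run the transaction or hand the request off to the node it names.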

Solving the 'Ephemeral' Problem on Fly.io

Modern cloud environments like Fly.io Machines are ephemeral. They restart, they move, and they scale. In this world, a local SQLite file is a liability unless it’s replicated. LiteFS makes the database 'liquid,' allowing it to flow between these ephemeral nodes. When a new Machine spins up, it doesn't start with an empty disk; it pulls the latest LTX files and reconstructs the state of the world in seconds.

The Post-LiteFS Cloud Landscape

It’s worth noting a major shift in the ecosystem. On October 15, 2024, the managed 'LiteFS Cloud' backup service was shut down. This move signaled a return to a more hands-on approach for disaster recovery. While LiteFS handles replication (keeping nodes in sync), it is not a backup solution. If you accidentally run a DELETE FROM users without a WHERE clause, LiteFS will faithfully replicate that mistake to every node in milliseconds. This is why savvy architects combine LiteFS for live replication with tools like Litestream for continuous streaming to S3-compatible storage. It’s the ultimate belt-and-suspenders approach to edge data durability.
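The difference between replication and backup is easy to demonstrate with plain SQLite. The sketch below is not Litestream and not a LiteFS feature. It uses the `Connection.backup()` method from Python's standard `sqlite3` module to take a point-in-time copy, then simulates the missing-WHERE-clause accident. The table contents and the helper names `snapshot` and `user_count` are made up for the example.

```python
import sqlite3


def snapshot(source: sqlite3.Connection, dest_path: str) -> None:
    """Copy the committed state of `source` into a separate SQLite file."""
    dest = sqlite3.connect(dest_path)
    try:
        source.backup(dest)  # stdlib wrapper around SQLite's online backup API
    finally:
        dest.close()


def user_count(conn: sqlite3.Connection) -> int:
    """Count rows in the example `users` table."""
    return conn.execute("SELECT COUNT(*) FROM users").fetchone()[0]


if __name__ == "__main__":
    import os
    import tempfile

    live = sqlite3.connect(":memory:")
    live.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    live.executemany(
        "INSERT INTO users (name) VALUES (?)", [("ada",), ("grace",), ("linus",)]
    )
    live.commit()

    with tempfile.TemporaryDirectory() as tmp:
        backup_path = os.path.join(tmp, "users-backup.db")
        snapshot(live, backup_path)

        live.execute("DELETE FROM users")  # the dreaded missing WHERE clause
        live.commit()

        restored = sqlite3.connect(backup_path)
        print("live rows:", user_count(live))
        print("backup rows:", user_count(restored))
        restored.close()
```

A replica would mirror the empty table; only the out-of-band snapshot still holds the rows.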

When to Choose LiteFS Over Traditional RDBMS

The decision to move to a distributed SQLite architecture shouldn't be based on hype. It’s a trade-off. You are choosing operational simplicity and massive read performance over complex write-scaling. Here is when LiteFS wins:

- Read-Heavy Workloads: If your app performs 10-100 reads for every 1 write, the local-first performance of LiteFS is unbeatable.

- Global User Bases: You need to provide a snappy experience to users regardless of their geography, without managing something like an Aurora Global Database for PostgreSQL.

- DevOps Minimalism: You want to stop managing connection pools, PgBouncer, and complex backup manifests. With LiteFS, the database is just a file that follows your code.
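If you have a query log handy, you can get a rough read-to-write ratio to check against the first criterion above. This is a deliberately naive sketch: it looks only at the first keyword of each statement, so CTEs starting with WITH, transaction control, and DDL are ignored. The names `read_write_counts` and `reads_per_write` are hypothetical.

```python
from typing import Iterable, Tuple

WRITE_VERBS = {"INSERT", "UPDATE", "DELETE", "REPLACE"}


def read_write_counts(statements: Iterable[str]) -> Tuple[int, int]:
    """Count SELECTs versus mutating statements by their leading keyword."""
    reads = writes = 0
    for sql in statements:
        words = sql.split(None, 1)
        verb = words[0].upper() if words else ""
        if verb == "SELECT":
            reads += 1
        elif verb in WRITE_VERBS:
            writes += 1
    return reads, writes


def reads_per_write(statements: Iterable[str]) -> float:
    """Reads per write; infinity when the log contains no writes at all."""
    reads, writes = read_write_counts(statements)
    return float("inf") if writes == 0 else reads / writes
```

Feed it a day of statements from your application log; a result comfortably above 10 puts you in the range described above.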

The FUSE vs. VFS Debate

The current implementation of LiteFS relies on FUSE, which can occasionally introduce latency spikes during heavy I/O. The community is eagerly looking toward a future 'LiteFS VFS' (Virtual File System) implementation, which would plug directly into SQLite’s internal OS interface. This would bypass the kernel context switching of FUSE, potentially raising the write ceiling by as much as 2x (a speculative figure, not a benchmark) and making SQLite replication even more competitive with the native integrations offered by competitors like Turso or Cloudflare D1. As highlighted in the analysis of SQLite eating the cloud in 2025, the trend is moving toward these native integrations to squeeze every microsecond of performance out of the edge.

Final Thoughts: Stop Over-Engineering Your Data Layer

The 'High-Availability Illusion' is the belief that you need a complex, expensive distributed database to be 'production-ready.' For most of us, that complexity is a distraction from building features. LiteFS provides a middle ground that used to be hard to reach: the reliability of a distributed system with the simple, boring, and predictable nature of SQLite. By co-locating your data with your compute on Fly.io, you aren't just making your app faster. You're making it more resilient to the inevitable failures of the modern web.

Ready to kill your database latency? Start by auditing your read-to-write ratio. If you find that you are paying for Postgres features you don't use, it might be time to bring your data to the edge with LiteFS. Your users, and your on-call rotation, will thank you.