The Logging Debt Trap: Why Your Ground Truth is Costing You a Fortune

Stop me if you’ve heard this one: a P0 incident hits at 3 AM. Your SRE team jumps into a Slack war room, and the first thing everyone does is fire up a Kibana dashboard. They spend the next forty minutes wrestling with regex, fighting through millions of lines of unformatted text, and praying that the specific error they need wasn't dropped by a buffer overflow. By the time someone finds the right log entry, the customer churn has already peaked.

We have been conditioned to believe that logs are the ultimate 'ground truth.' But in the world of distributed systems, this log-first obsession is a hallucination. It’s a relic of the monolithic era that, by some estimates, eats up to 30% of engineering budgets in ingestion and indexing costs alone. Transitioning to a modern OpenTelemetry observability correlation strategy isn't just about 'better tools'; it's about surviving the data deluge without going bankrupt.

The 'Three Pillars' Cliché is Dead

For years, we’ve been told observability is a tripod of logs, metrics, and traces. The problem? Most teams treat them as isolated silos. You see a spike in a Grafana metric, then you manually copy a timestamp, head over to your log aggregator, and hope the clocks are synchronized. This context switching is the silent killer of mean-time-to-resolution (MTTR).

According to The New Stack, the shift from DIY ELK stacks to unified pipelines is driven by the sheer operational nightmare of scaling row-based log storage. When you prioritize logs first, you are essentially paying to index 'everything is fine' messages just to find the 0.1% that actually matter. It is a massive waste of high-performance compute.

Mastering the Trace-to-Metric Correlation with Exemplars

The real magic happens when you stop looking at metrics and traces as different things and start seeing them as different views of the same telemetry. This is where OpenTelemetry observability correlation truly shines through a feature called Exemplars.

How Exemplars Solve the Cardinality Problem

Normally, adding high-cardinality data (like a UserID or RequestID) to metrics as labels makes your time-series database explode: every unique value creates a new series, so storage balloons and queries become too expensive to run. Exemplars solve this by letting you attach a specific Trace ID to an individual metric data point without indexing it as a dimension.
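
To put numbers on the explosion, here is a back-of-the-envelope sketch in Python. The label names and counts are hypothetical, chosen only for illustration, and the calculation ignores the extra `_sum` and `_count` series a Prometheus histogram also carries.

```python
from math import prod

# Hypothetical label cardinalities for one latency histogram metric.
BASE_LABELS = {"endpoint": 20, "status_class": 3}
HISTOGRAM_BUCKETS = 12  # each bucket ("le" value) is its own series


def series_count(label_cardinalities: dict[str, int], buckets: int) -> int:
    """Every unique label combination yields one series per bucket."""
    return prod(label_cardinalities.values()) * buckets


without_user = series_count(BASE_LABELS, HISTOGRAM_BUCKETS)
with_user = series_count({**BASE_LABELS, "user_id": 50_000}, HISTOGRAM_BUCKETS)

print(without_user)  # 720
print(with_user)     # 36000000
```

Under these assumptions, the exemplar approach keeps the metric at 720 series and carries the user or trace identifier on individual samples instead.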

When you see a P99 latency spike on a Prometheus graph, an Exemplar provides a direct link—a 'bridge'—to the specific trace that caused that spike. You aren't searching anymore; you are clicking. This sub-second transition from 'something is wrong' (metric) to 'here is exactly why' (trace) is the hallmark of a mature OpenTelemetry observability correlation setup.
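
A minimal sketch of the idea, assuming a toy in-memory histogram rather than any real SDK: each bucket keeps the trace ID of the most recent request that landed in it. The class, method names, and trace IDs below are hypothetical.

```python
from __future__ import annotations

import bisect
from dataclasses import dataclass, field


@dataclass
class Exemplar:
    value_ms: float
    trace_id: str


@dataclass
class LatencyHistogram:
    """Toy histogram that keeps one exemplar (a trace ID) per bucket.

    The trace ID rides alongside the bucket count instead of becoming a label,
    so it never creates a new time series. Illustrative only, not an SDK API.
    """

    bounds_ms: list[float]
    counts: list[int] = field(init=False)
    exemplars: list[Exemplar | None] = field(init=False)

    def __post_init__(self) -> None:
        # One extra slot for the overflow (+Inf) bucket.
        n = len(self.bounds_ms) + 1
        self.counts = [0] * n
        self.exemplars = [None] * n

    def record(self, value_ms: float, trace_id: str) -> None:
        # Upper bounds are inclusive, like Prometheus "le" buckets.
        i = bisect.bisect_left(self.bounds_ms, value_ms)
        self.counts[i] += 1
        self.exemplars[i] = Exemplar(value_ms, trace_id)  # keep the latest

    def slowest_exemplar(self) -> Exemplar | None:
        """Return the exemplar from the highest non-empty bucket: the bridge to a trace."""
        for ex in reversed(self.exemplars):
            if ex is not None:
                return ex
        return None


hist = LatencyHistogram(bounds_ms=[100, 250, 500, 1000])
hist.record(42, "trace-aaa")
hist.record(180, "trace-bbb")
hist.record(2300, "trace-ccc")  # the tail-latency outlier

print(hist.counts)                       # [1, 1, 0, 0, 1]
print(hist.slowest_exemplar().trace_id)  # trace-ccc
```

Keeping only the latest exemplar per bucket is one simple retention choice; real SDKs use their own reservoir strategies, so treat this as an illustration of the 'bridge,' not of any particular implementation.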

Implementing Semantic Conventions

The glue that holds this together is the OpenTelemetry Semantic Conventions. By ensuring that every signal—whether it’s a log, a metric, or a span—shares the exact same service.name, host.id, and k8s.pod.name, you eliminate the guesswork. When your collector configuration is standardized, your backend can automatically correlate signals. You no longer need to wonder if 'api-gateway-v2' in your logs is the same as 'api_gw_2' in your metrics.
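
Correlation ultimately reduces to a join on shared resource attributes. The sketch below assumes a hypothetical alias table that maps the two legacy names from the example onto one canonical `service.name`, then checks that a log and a metric from the same pod produce the same join key. The host and pod values are made up.

```python
from __future__ import annotations

# Hypothetical alias table: the legacy names from the example,
# mapped to one canonical value for the `service.name` resource attribute.
SERVICE_ALIASES = {
    "api-gateway-v2": "api-gateway",
    "api_gw_2": "api-gateway",
}

# Resource attribute keys (from the OTel semantic conventions) used as the join key.
CORRELATION_KEYS = ("service.name", "host.id", "k8s.pod.name")


def normalize_resource(resource: dict[str, str]) -> dict[str, str]:
    """Return a copy with service.name rewritten to its canonical form."""
    out = dict(resource)
    name = out.get("service.name")
    if name is not None:
        out["service.name"] = SERVICE_ALIASES.get(name, name)
    return out


def correlation_key(resource: dict[str, str]) -> tuple[str | None, ...]:
    """Build the tuple a backend could join logs, metrics, and spans on."""
    norm = normalize_resource(resource)
    return tuple(norm.get(k) for k in CORRELATION_KEYS)


log_resource = {"service.name": "api-gateway-v2", "host.id": "i-0abc123", "k8s.pod.name": "gw-7d9f"}
metric_resource = {"service.name": "api_gw_2", "host.id": "i-0abc123", "k8s.pod.name": "gw-7d9f"}

print(correlation_key(log_resource) == correlation_key(metric_resource))  # True
```

An alias table like this is a stopgap; the cleaner fix is to set the name once at the source, for example through the SDK's `OTEL_SERVICE_NAME` environment variable, so no mapping is needed downstream.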

The Economics of First-Mile Processing

One of the biggest hurdles for SREs is the fear of sampling. We’ve been told that if we don't keep 100% of our logs, we’ll miss the 'one in a million' error. But as Honeycomb argues, switching interfaces between logs and traces during an investigation is what actually leads to missed insights.

By using the OpenTelemetry Collector, you can implement 'tail-based sampling,' where the keep-or-drop decision is made only after a trace has completed. Instead of keeping every trace for a boring 200 OK request, you can configure your pipeline to keep 100% of traces that end in an error or exceed a latency threshold, while sampling only 1% of successful requests. Pair this with metrics generated from the full, unsampled stream, and you maintain high-level visibility while reducing your storage footprint by up to 90%.
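
The decision logic behind those rules can be sketched in a few lines of Python. This is not collector code (the contrib distribution's `tail_sampling` processor expresses the same idea as declarative policies); the threshold, the rate, and the `Span` shape are assumptions for illustration.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class Span:
    trace_id: str
    duration_ms: float
    is_error: bool


LATENCY_THRESHOLD_MS = 1_000.0  # hypothetical latency threshold
SUCCESS_SAMPLE_RATE = 0.01      # keep roughly 1% of healthy traces


def hash_fraction(trace_id: str) -> float:
    """Map a trace ID to a stable value in [0, 1) so the decision is repeatable."""
    digest = hashlib.sha256(trace_id.encode()).digest()
    return int.from_bytes(digest[:6], "big") / 2**48


def keep_trace(spans: list[Span]) -> bool:
    """Decide only after all spans of the trace have arrived (the 'tail' in tail-based)."""
    if any(s.is_error for s in spans):
        return True  # keep 100% of failed traces
    # Approximate trace latency by its longest span (usually the root span).
    if max(s.duration_ms for s in spans) > LATENCY_THRESHOLD_MS:
        return True  # keep 100% of slow traces
    return hash_fraction(spans[0].trace_id) < SUCCESS_SAMPLE_RATE


healthy = [[Span(f"trace-{i}", 120.0, False)] for i in range(10_000)]
kept = sum(keep_trace(t) for t in healthy)
print(f"kept {kept} of {len(healthy)} healthy traces")
print(keep_trace([Span("trace-err", 50.0, True)]))       # True
print(keep_trace([Span("trace-slow", 2_500.0, False)]))  # True
```

Hashing the trace ID instead of calling a random number generator makes the 1% decision repeatable for a given trace. With 10,000 healthy traces, the kept count should land near 100.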

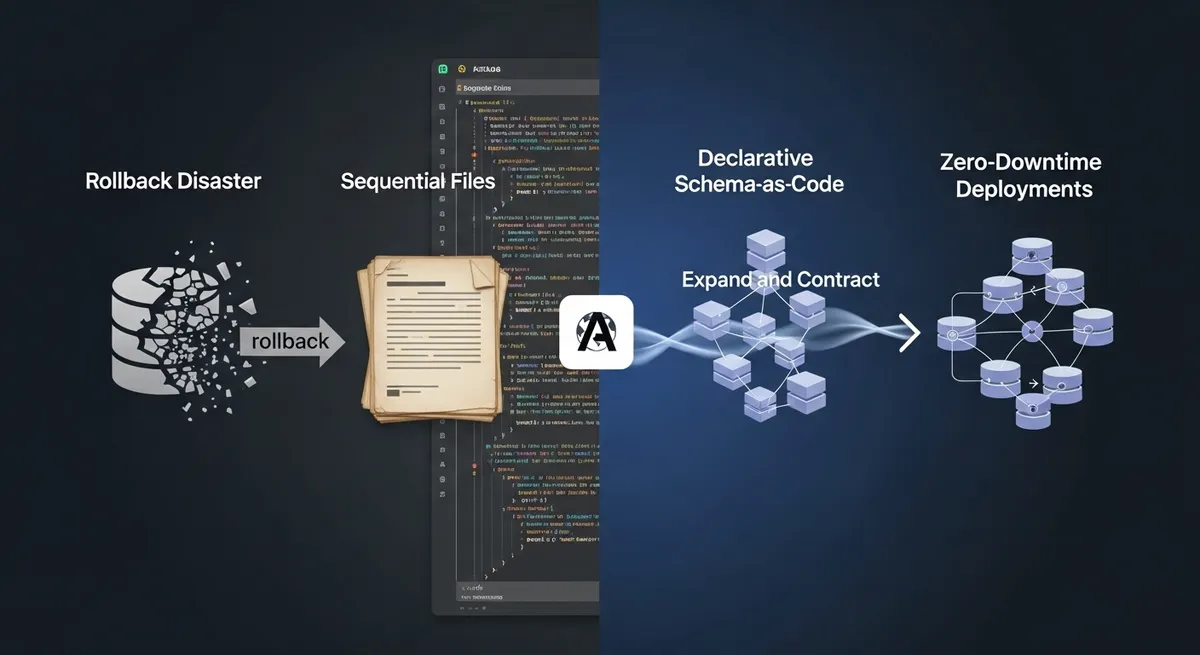

Tactical Steps for Your OTel Collector Configuration

Moving to a correlation-first strategy requires a shift in how you configure and deploy the OpenTelemetry Collector. Here is the blueprint for a modern pipeline:

- Attribute Mapping: Use the `resourcedetectionprocessor` to automatically grab cloud provider metadata. This ensures every signal, not just metrics, is tagged with the right environment context.
- Span-to-Metric Generation: Don't wait for your app to emit metrics. Use the `spanmetrics` connector (the successor to the now-deprecated `spanmetricsprocessor`) in the collector to generate request counts and latency distributions directly from your traces.
- Filtering at the Edge: Drop verbose debug logs at the collector level before they ever hit your expensive SaaS backend. If you need them later, use a 'telemetry pipeline' to route raw logs to a cheap S3 bucket while sending structured data to your observability platform.
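
The three steps can be simulated end to end in a small Python sketch. Everything here is hypothetical: `DETECTED_RESOURCE` stands in for what a resource detector might discover, and `span_metrics` reports a mean latency where a real span-metrics component would emit a histogram.

```python
from __future__ import annotations

from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass
class SpanRecord:
    service: str
    route: str
    duration_ms: float
    is_error: bool


@dataclass
class LogRecord:
    severity: str  # e.g. "DEBUG", "INFO", "ERROR"
    body: str


# Hypothetical stand-in for metadata a cloud resource detector might return.
DETECTED_RESOURCE = {"cloud.provider": "aws", "cloud.region": "eu-west-1"}


def enrich(attrs: dict[str, str]) -> dict[str, str]:
    """Attribute mapping: add detected metadata; in this sketch, app-set keys win."""
    return {**DETECTED_RESOURCE, **attrs}


def span_metrics(spans: list[SpanRecord]) -> dict[tuple[str, str], dict[str, float]]:
    """Span-to-metric: call count, error count, and mean latency per (service, route)."""
    calls: Counter = Counter()
    errors: Counter = Counter()
    total_ms: defaultdict = defaultdict(float)
    for s in spans:
        key = (s.service, s.route)
        calls[key] += 1
        errors[key] += int(s.is_error)
        total_ms[key] += s.duration_ms
    return {
        key: {"calls": calls[key], "errors": errors[key], "mean_ms": total_ms[key] / calls[key]}
        for key in calls
    }


def route_logs(logs: list[LogRecord]) -> tuple[list[LogRecord], list[LogRecord]]:
    """Edge filtering: DEBUG stays out of the paid backend; the archive gets everything."""
    backend = [r for r in logs if r.severity != "DEBUG"]
    archive = list(logs)
    return backend, archive


# Usage with made-up data.
print(enrich({"service.name": "checkout"})["cloud.provider"])  # aws

spans = [
    SpanRecord("checkout", "/pay", 120.0, False),
    SpanRecord("checkout", "/pay", 80.0, True),
    SpanRecord("checkout", "/cart", 40.0, False),
]
print(span_metrics(spans)[("checkout", "/pay")])
# {'calls': 2, 'errors': 1, 'mean_ms': 100.0}

logs = [LogRecord("DEBUG", "cache miss"), LogRecord("ERROR", "payment timeout")]
backend, archive = route_logs(logs)
print(len(backend), len(archive))  # 1 2
```

In a real pipeline the ordering matters: generate span metrics from the full trace stream before any tail-based sampling drops traces, or your request counts and error rates will reflect only the sampled fraction.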

Overcoming the 'Log-First' Cultural Bias

The hardest part of this transition isn't the YAML; it's the people. Veteran engineers often view raw logs as the only source of truth. You have to demonstrate that the metrics-vs-logs debate isn't just about query speed; it's about cognitive load. A developer who can navigate from a metric spike to a broken line of code in three clicks will always outperform one who is an 'expert' at grep.

OpenTelemetry is also adding continuous profiling as a new signal, often called the 'fourth pillar,' giving us code-level execution insights. This makes the case for a unified telemetry pipeline even stronger. When you can see that a specific function call is causing a CPU spike directly from your dashboard, the old way of 'logging everything and sorting it out later' starts to look obsolete.

Precision Over Volume

Effective observability prioritizes 'Time-to-Understanding' over the 'Volume of Data.' By mastering OpenTelemetry observability correlation, you move away from the expensive hallucination that more logs equal more insight. Start by implementing Exemplars, standardize your attributes using Semantic Conventions, and embrace tail-based sampling in your collector. Your budget—and your on-call engineers—will thank you.

Ready to stop digging through the noise? Start by auditing your current ingestion costs and identify where a single trace could replace a thousand logs. It’s time to build a strategy that scales with your code, not your credit card limit.