The RAG Sprawl is Killing Your Productivity

I remember the first time I got a basic Retrieval-Augmented Generation (RAG) pipeline working. It felt like magic. I threw a few PDFs into a vector database, hooked up a similarity search, and suddenly my LLM was talking about my private data. But as anyone who has moved past the proof-of-concept stage knows, the honeymoon phase ends quickly. Soon, you are drowning in chunking strategies, overlap percentages, and the eternal nightmare of recursive character splitting. You aren't building a product anymore; you are a glorified plumber for text chunks.

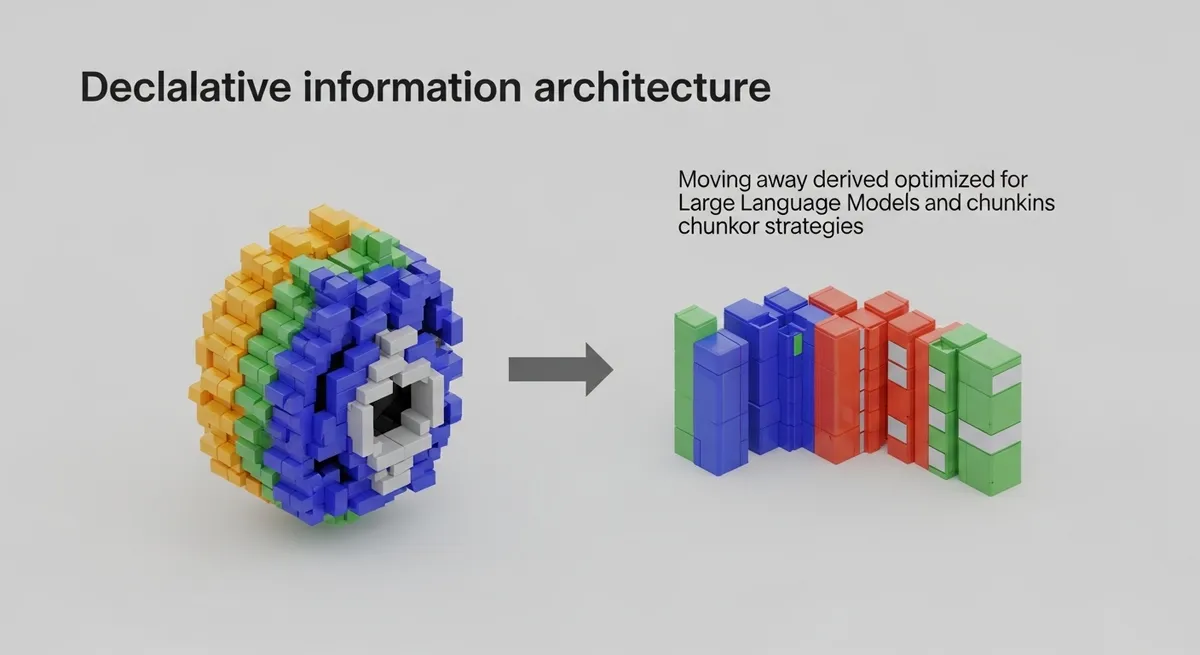

The industry is hitting a wall with manual RAG. We have spent the last eighteen months obsessing over embeddings and vector distances, yet our LLMs still hallucinate because they lack the structural context of the data. This is where declarative information architecture comes in. It is the shift from 'how' we store data to 'what' the data actually represents, and it is making the DIY RAG pipeline look like a relic of a bygone era.

The Problem with Manual RAG Pipelines

In a traditional RAG setup, you are responsible for the entire ETL (Extract, Transform, Load) process. You decide how to slice the data, which embedding model to use, and how to manage the metadata. This imperative approach is fragile. If your data schema changes or you need to switch from a simple vector search to a knowledge graph, you are essentially rewriting your entire ingestion engine.
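To make that fragility concrete, here is a minimal sketch of the kind of hand-rolled chunker most of us start with. The function name `chunk_text` and the sizes are my own placeholders for illustration, not taken from any particular library.

```python
def chunk_text(text: str, chunk_size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into fixed-size character windows that overlap."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in the range [0, chunk_size)")

    step = chunk_size - overlap  # how far each new window advances
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this window already reached the end of the text
    return chunks


# Tiny demo: 10 characters, windows of 4 that share 1 character.
demo_chunks = chunk_text("abcdefghij", chunk_size=4, overlap=1)
print(demo_chunks)
```

Every constant in there is a decision you own. Change the chunk size or the overlap and you are re-embedding and re-indexing everything downstream.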

Furthermore, vector search alone is fundamentally limited. It is great for finding 'similar' things, but terrible at understanding relationships. If I ask a vector-based RAG system about the relationship between two entities mentioned five pages apart, it will likely fail, because each chunk is scored against the query on its own and nothing links the two mentions. This lack of relational awareness is one reason so many enterprise AI projects stall. We need to move beyond flat vectors and toward graph data modeling for LLMs, and that modeling has to happen automatically.
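A toy example shows the failure mode. The sketch below uses bag-of-words cosine similarity as a stand-in for a real embedding model (an assumption made to keep it dependency-free), and the three "chunks" and the query are invented. Real embeddings are fuzzier, but the scoring is the same in spirit: each chunk is compared to the query in isolation.

```python
import math
from collections import Counter


def bag_of_words(text: str) -> Counter:
    """Crude stand-in for an embedding: lowercase token counts."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two token-count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Invented chunks: the middle one is the bridge between the other two.
CHUNKS = [
    "ada founded acme",
    "acme owns gamma holdings",
    "gamma holdings acquired beta labs",
]
QUERY = "how is ada connected to beta labs"


def score_chunks(query: str, chunks: list[str]) -> list[float]:
    """Score every chunk against the query independently."""
    q = bag_of_words(query)
    return [cosine(q, bag_of_words(chunk)) for chunk in chunks]


scores = score_chunks(QUERY, CHUNKS)
for chunk, score in zip(CHUNKS, scores):
    print(f"{score:.2f}  {chunk}")
# The bridging chunk shares no tokens with the query, so it scores 0.0.
```

The chunk that actually connects Ada to Beta Labs is the one the query cannot see. No amount of top-k tuning fixes that, because the link lives between the chunks, not inside any one of them.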

Enter Cognee: The Shift to Declarative Information Architecture

The Cognee framework is at the forefront of this revolution. Instead of you telling the system how to chunk and store data, you define the desired state of your information. Cognee takes over the heavy lifting of transforming unstructured data into structured, graph-based formats. This is the essence of declarative information architecture: you describe the domain, and the framework ensures the data is mapped into a format the LLM can actually reason across.
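To show what "describe the domain" means in practice, here is a library-free sketch of the declarative idea. To be clear, this is not Cognee's API: `RelationType`, `DOMAIN`, and `build_graph` are hypothetical names I made up, and the hardcoded facts stand in for whatever an LLM extraction step would produce.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RelationType:
    """One allowed relationship: source entity type, name, target entity type."""
    source: str
    name: str
    target: str


# The declarative part: a description of the domain, with no ingestion steps.
DOMAIN = {
    RelationType("Person", "FOUNDED", "Company"),
    RelationType("Company", "ACQUIRED", "Company"),
}


def build_graph(domain, facts):
    """Generic engine: keep only the facts that fit the declared domain.

    Each fact is (source_type, source_name, relation, target_type, target_name).
    """
    nodes, edges = set(), []
    for s_type, s_name, relation, t_type, t_name in facts:
        if RelationType(s_type, relation, t_type) not in domain:
            continue  # not part of the declared domain, so it is dropped
        nodes.add((s_type, s_name))
        nodes.add((t_type, t_name))
        edges.append((s_name, relation, t_name))
    return nodes, edges


# Hardcoded stand-in for facts an extraction step might emit.
FACTS = [
    ("Person", "Ada", "FOUNDED", "Company", "Acme"),
    ("Company", "Acme", "ACQUIRED", "Company", "Beta Labs"),
    ("Person", "Ada", "LIKES", "Company", "Acme"),  # not declared, ignored
]

nodes, edges = build_graph(DOMAIN, FACTS)
print(edges)
```

Notice that supporting a new relationship means adding one line to `DOMAIN`, not rewriting the engine. That separation is the whole point.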

Why Graph Data Modeling Changes Everything

When you use a framework like Cognee, you aren't just indexing text; you are building a cognitive map. It uses LLMs to extract entities and relationships during the ingestion phase, creating a graph that sits alongside your vectors. Research on GraphRAG techniques suggests that combining structured graph data with unstructured text can noticeably improve accuracy on multi-hop reasoning tasks, where the answer depends on chaining several facts together.

By adopting a declarative approach, you gain several immediate advantages:

- Automatic Schema Discovery: You don't have to pre-define every single node type. The framework can infer the structure based on the data provided.

- Relationship Persistence: The connection between 'Founder' and 'Company' isn't lost in a random chunk; it is a first-class citizen in your database.

- RAG Pipeline Optimization: Because the data is structured, the retrieval phase can use graph traversal to find context that a simple cosine similarity search would miss.
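The last point is the easiest to see in code. Below is a minimal sketch of graph traversal over extracted triples, using a plain breadth-first search. The triples are invented and `find_path` is a hypothetical helper of my own; a real graph store would answer this with its own query language.

```python
from collections import defaultdict, deque

# Invented (subject, relation, object) triples, as an extraction step might emit.
TRIPLES = [
    ("Ada", "FOUNDED", "Acme"),
    ("Acme", "OWNS", "Gamma Holdings"),
    ("Gamma Holdings", "ACQUIRED", "Beta Labs"),
]


def find_path(triples, start, goal):
    """Breadth-first search; returns the triples linking start to goal, or None."""
    # Index each triple under both endpoints so edges can be walked either way.
    adjacency = defaultdict(list)
    for triple in triples:
        subject, _, obj = triple
        adjacency[subject].append((obj, triple))
        adjacency[obj].append((subject, triple))

    queue = deque([(start, [])])
    seen = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        for neighbour, triple in adjacency[node]:
            if neighbour not in seen:
                seen.add(neighbour)
                queue.append((neighbour, path + [triple]))
    return None  # the two entities are not connected


path = find_path(TRIPLES, "Ada", "Beta Labs")
for subject, relation, obj in path or []:
    print(f"{subject} -[{relation}]-> {obj}")
```

The returned path includes the middle hop through Gamma Holdings, which is exactly the kind of bridging fact that has nothing in common with the question's wording. Hand those three triples to the LLM as context and the multi-hop question becomes answerable.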

Moving from Plumber to Architect

As developers, we have a habit of over-engineering the wrong things. We spend weeks tuning RAG pipeline parameters when we should be focused on the user experience. Cognee allows us to step back. By using a declarative layer, you treat your data as a living knowledge base rather than a static pile of tokens.

Think of it like the transition from manual infrastructure management to Terraform. You don't manually click around a console to spin up servers; you write a configuration file that describes the infrastructure you want. Declarative information architecture does the same for your AI's brain. You provide the raw material and the constraints, and the system builds the most efficient path for retrieval.

The Reality of Implementation

Implementing this isn't just about adding another library; it's about changing your mental model. You have to stop thinking in terms of 'top-k results' and start thinking in terms of 'semantic density': how much usable information can the LLM extract from this specific node? Frameworks like Cognee help by automating graph data modeling for LLMs, so that every piece of data is contextualized before it ever hits the prompt window. That cuts down the noise that plagues traditional RAG systems.

The End of the DIY Era

The argument for building your own RAG pipeline is getting weaker by the day. Unless you are doing something so specialized that existing frameworks can't touch it, you are likely wasting cycles on solved problems. The industry is moving toward standardized, declarative patterns because they are more maintainable, more scalable, and frankly, more intelligent. As noted in discussions surrounding property graph indexes, the future of LLM data usage is deeply interconnected, not siloed in flat files.

If you want to build AI applications that actually understand the data they are processing, you need to embrace declarative information architecture. Stop worrying about chunk sizes and start focusing on the relationships that define your domain. The tools are here, the patterns are established, and the benefits in terms of accuracy and development speed are too large to ignore.

Take the Next Step

Ready to stop plumbing and start building? I highly recommend checking out the Cognee repository and experimenting with its approach to graph-based data ingestion. Move your project toward a declarative model and see how much more coherent your LLM's responses become. The era of manual RAG is over, so don't get left behind in the vector graveyard. What has been your biggest headache with manual RAG? Let’s talk about it in the comments below.