The Multi-Core Lie and the Redis Wall

We’ve all been there: your application is booming, traffic is spiking, and suddenly your perfectly tuned Redis instance starts gasping for air. You do what any sensible engineer would do: you throw more CPU and RAM at the problem. But then the cold reality of Amdahl's Law hits you. Despite running on a beastly 64-core AWS instance, your throughput barely budges, because Redis executes every command on a single-threaded event loop (the I/O threads added in Redis 6 offload socket reads and writes, not command execution). You’re paying for a Ferrari but driving it in a school zone.

To get around this, we usually resort to Redis Cluster. We split our data across 16,384 hash slots, babysit a gossip-based cluster bus, and pray that resharding doesn't trigger a latency spike that takes down the checkout service. It is a massive operational tax paid solely to work around a legacy architectural limitation. This is why the performance gap between DragonflyDB and Redis has become a critical conversation for anyone suffering from 'cluster fatigue'.
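To make the 16,384-slot figure concrete, here is a minimal Python sketch of how Redis Cluster maps a key to a slot, following the HASH_SLOT definition in the Redis Cluster specification: CRC16 (XMODEM variant) of the key, modulo 16384, where a non-empty `{...}` hash tag restricts hashing to the tagged substring. The function names are our own; treat this as an illustration, not a replacement for your client library's routing code.

```python
def crc16_xmodem(data: bytes) -> int:
    """CRC16/XMODEM (poly 0x1021, init 0), the variant Redis Cluster uses."""
    crc = 0
    for byte in data:
        crc ^= byte << 8
        for _ in range(8):
            if crc & 0x8000:
                crc = ((crc << 1) ^ 0x1021) & 0xFFFF
            else:
                crc = (crc << 1) & 0xFFFF
    return crc


def key_hash_slot(key: bytes) -> int:
    """Map a key to one of 16,384 slots, honoring {hash tags}."""
    start = key.find(b"{")
    if start != -1:
        end = key.find(b"}", start + 1)
        # Only a non-empty tag counts; "foo{}{bar}" hashes the whole key.
        if end > start + 1:
            key = key[start + 1:end]
    return crc16_xmodem(key) % 16384


# Keys sharing a hash tag land in the same slot.
print(key_hash_slot(b"{user1000}.following") == key_hash_slot(b"{user1000}.followers"))  # True
```

Hash tags are the escape hatch that keeps multi-key commands legal in a cluster, since those commands require every key to live in the same slot; deciding where to put them is exactly the kind of sharding logic a single-node engine lets you skip.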

The Shared-Nothing Revelation

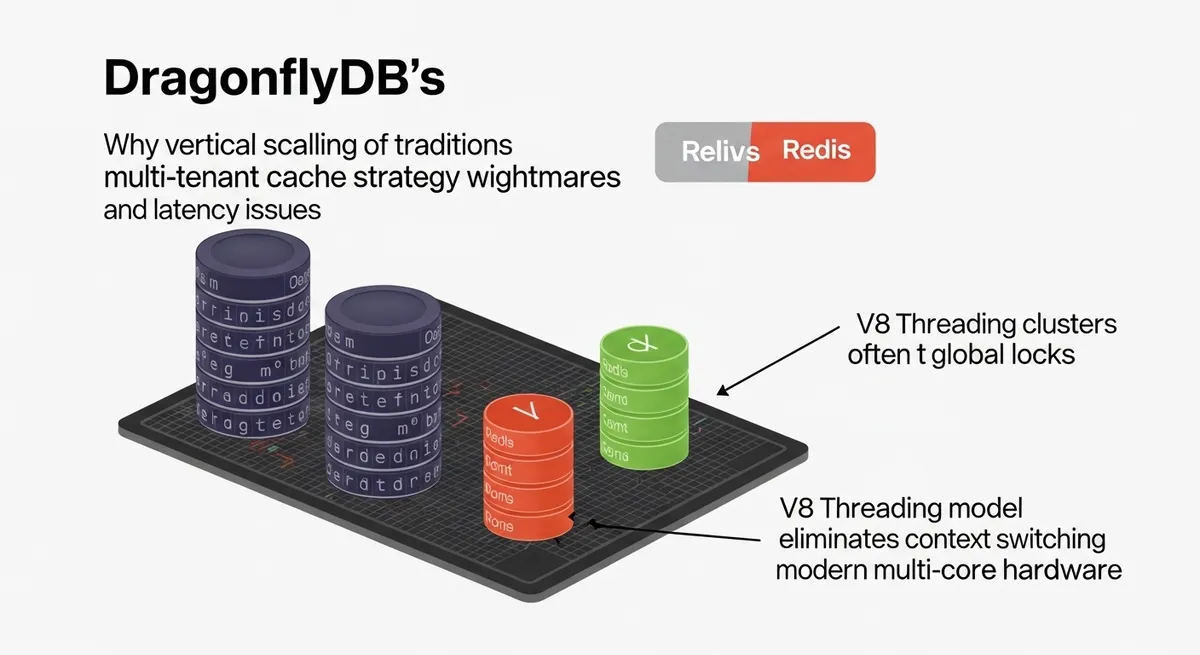

DragonflyDB doesn't just try to make Redis faster; it reimagines how an in-memory data store should behave on modern hardware. At its core is a shared-nothing architecture. In a traditional multi-threaded system, threads constantly fight over shared resources, protected by expensive mutexes and global locks. Dragonfly takes the opposite approach.

Inspired by the thread-per-core design of the Seastar framework, Dragonfly partitions its keyspace into independent shards. Each CPU core owns a specific slice of the data and runs its own execution loop. There is no contention over the data and no global lock. When a request comes in, it is routed to the thread that owns the relevant data. By treating a multi-core server as an orchestrated swarm of independent workers, Dragonfly eliminates the lock contention and context-switching overhead that kill performance in traditional multi-threaded systems.
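The ownership model can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not Dragonfly's implementation: each hypothetical `Shard` owns a private dict that only its worker thread touches, requests travel through a per-shard queue instead of contending for a lock on the data, and CRC32 stands in for whatever hash function Dragonfly actually uses. Python's GIL means these threads do not truly run in parallel; the point is the ownership and message-passing pattern, which a native engine can run as one thread per core.

```python
import queue
import threading
import zlib


class Shard:
    """Owns a private dict; only this shard's worker thread ever touches it."""

    def __init__(self):
        self.data = {}
        self.inbox = queue.Queue()
        self.worker = threading.Thread(target=self._run, daemon=True)
        self.worker.start()

    def _run(self):
        while True:
            msg = self.inbox.get()
            if msg is None:  # shutdown signal
                return
            op, key, value, reply = msg
            if op == "SET":
                self.data[key] = value
                reply.put("OK")
            else:  # "GET"
                reply.put(self.data.get(key))


class ShardedStore:
    """Routes each key to the one shard that owns it; the data dicts are never shared."""

    def __init__(self, num_shards):
        self.shards = [Shard() for _ in range(num_shards)]

    def shard_for(self, key):
        # Assumption: CRC32 is a stand-in for Dragonfly's real key hash.
        return zlib.crc32(key.encode()) % len(self.shards)

    def _call(self, op, key, value=None):
        reply = queue.Queue(maxsize=1)
        self.shards[self.shard_for(key)].inbox.put((op, key, value, reply))
        return reply.get()  # block until the owning shard answers

    def set(self, key, value):
        return self._call("SET", key, value)

    def get(self, key):
        return self._call("GET", key)

    def close(self):
        for shard in self.shards:
            shard.inbox.put(None)
        for shard in self.shards:
            shard.worker.join()


store = ShardedStore(num_shards=4)
store.set("user:1", "alice")
print(store.get("user:1"))  # alice
store.close()
```

The design choice worth noticing is that correctness comes from ownership rather than locking: because exactly one thread ever mutates a given shard's dict, there is nothing to protect.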

Why Dragonfly Scales Where Redis Stalls

1. Vertical Scaling Without the Tears

In the DragonflyDB vs Redis performance debate, the most jarring statistic is throughput. While Redis needs horizontal clustering to put more than one core to work on command execution, Dragonfly scales vertically by using every available core in a single process. Benchmarks published by the Dragonfly team show it reaching roughly 3.8 to 4 million operations per second on a single c6gn.16xlarge instance. Matching that with Redis would mean running dozens of nodes behind cluster-aware clients or proxy layers, with all the overhead that entails.
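A back-of-the-envelope sizing helper makes the 'dozens of nodes' claim checkable against your own numbers. The per-primary throughput is an assumption you should replace with a measurement from your own workload (for example with `redis-benchmark`); the 200,000 ops/sec below is a placeholder, not a benchmark result, and the helper's name is our own.

```python
import math


def redis_cluster_size(target_ops_per_sec, ops_per_primary, replicas_per_primary=1):
    """Return (primaries, total_nodes) needed to reach target_ops_per_sec.

    Assumes throughput scales linearly with primaries and that replicas exist
    for failover only (they add nodes but no write capacity).
    """
    primaries = math.ceil(target_ops_per_sec / ops_per_primary)
    total_nodes = primaries * (1 + replicas_per_primary)
    return primaries, total_nodes


# Placeholder figures: ~4M ops/sec target, an assumed 200K ops/sec per primary.
print(redis_cluster_size(4_000_000, 200_000))  # (20, 40)
```

Linear scaling is the optimistic case; cross-slot restrictions and uneven key distribution usually push the real node count higher.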

2. The Dashtable and Memory Efficiency

Memory is the most expensive part of your cache stack. Redis stores keys in a conventional chained hash table, where per-entry pointers and incremental rehashing (which temporarily keeps two tables alive) add significant overhead. Dragonfly introduces a novel data structure called the 'Dashtable'. According to the Dragonfly engineering team, this structure reduces memory overhead by 30-60%. More importantly, Dragonfly avoids the dreaded fork() system call for snapshots. During a BGSAVE, Redis can need up to 2x its dataset size in memory, because copy-on-write duplicates every page the parent modifies while the forked child is writing the snapshot. Dragonfly instead uses a fork-less, asynchronous snapshotting mechanism that keeps memory usage stable and predictable.
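One way to build a fork-less, point-in-time snapshot (and, as we understand it, close in spirit to Dragonfly's bucket-versioning approach) is sketched below: the snapshot walks buckets one at a time, and any write that lands on a bucket the walk has not reached yet first serializes that bucket's current contents. Nothing is duplicated page by page. Everything here (the `VersionedShard` class, CRC32 bucketing, and the in-memory list standing in for the snapshot file) is a hypothetical, simplified sketch, not Dragonfly's code.

```python
import zlib


class VersionedShard:
    """Toy shard with version-based, fork-less snapshotting."""

    def __init__(self, num_buckets=8):
        self.buckets = [{} for _ in range(num_buckets)]
        self.versions = [0] * num_buckets  # epoch in which each bucket was last captured
        self.epoch = 0
        self.active = False                # is a snapshot in progress?
        self.cursor = 0
        self.snapshot_out = []             # stands in for the snapshot file

    def _bucket(self, key):
        return zlib.crc32(key.encode()) % len(self.buckets)

    def _capture(self, b):
        # Serialize the bucket as it is right now, then mark it done for this epoch.
        self.snapshot_out.extend(sorted(self.buckets[b].items()))
        self.versions[b] = self.epoch

    def start_snapshot(self):
        self.epoch += 1
        self.active = True
        self.cursor = 0
        self.snapshot_out = []

    def snapshot_step(self):
        """Capture the next not-yet-captured bucket; return False when finished."""
        if not self.active:
            return False
        while self.cursor < len(self.buckets):
            b = self.cursor
            self.cursor += 1
            if self.versions[b] < self.epoch:
                self._capture(b)
                return True
        self.active = False
        return False

    def set(self, key, value):
        b = self._bucket(key)
        if self.active and self.versions[b] < self.epoch:
            self._capture(b)  # preserve pre-write state before mutating
        self.buckets[b][key] = value

    def get(self, key):
        return self.buckets[self._bucket(key)].get(key)


shard = VersionedShard(num_buckets=4)
shard.set("a", 1)
shard.start_snapshot()
shard.set("a", 2)  # a write that arrives mid-snapshot
while shard.snapshot_step():
    pass
print(shard.snapshot_out)  # [('a', 1)]
print(shard.get("a"))      # 2
```

The extra memory is bounded by buckets captured early because of writes, which a real engine streams to disk, rather than by every page touched during a fork.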

3. Multi-Tenant Optimization

If you're building a SaaS platform where thousands of customers share the same infrastructure, isolating tenants from one another in the cache is your primary headache. In single-threaded Redis, one 'heavy' tenant running an O(N) command like KEYS * blocks every other client until it finishes (head-of-line blocking). Because Dragonfly spreads the workload across its shared-nothing shards, the blast radius of a heavy query can shrink considerably, helping keep tail latency (P99) low even under extreme aggregate load.
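A toy queueing model shows why sharding shrinks the blast radius. All requests arrive at time zero and each worker serves its own FIFO queue: with one worker, every light request waits behind the heavy one, while with several workers only the requests hashed to the heavy request's shard do. The heavy request here models a single-key O(N) operation such as `HGETALL` on a huge hash; a command like `KEYS` that must visit every shard would instead occupy each shard for its share of the work. The `completion_times` helper and CRC32 routing are illustrative assumptions.

```python
import zlib


def completion_times(requests, num_workers):
    """Finish time (ms) of each request in a toy FIFO model.

    requests: list of (key, cost_ms), all arriving at t=0 in list order.
    Each key goes to worker crc32(key) % num_workers.
    """
    busy_until = [0.0] * num_workers
    finished = []
    for key, cost_ms in requests:
        worker = zlib.crc32(key.encode()) % num_workers
        busy_until[worker] += cost_ms
        finished.append(busy_until[worker])
    return finished


# One heavy 100 ms request followed by twenty 1 ms lookups from other tenants.
reqs = [("tenant-heavy:bighash", 100.0)] + [(f"tenant-{i}:get", 1.0) for i in range(20)]
single = completion_times(reqs, 1)
sharded = completion_times(reqs, 8)
print(sum(t < 100 for t in single[1:]))   # 0: every light request waits behind the heavy one
print(sum(t < 100 for t in sharded[1:]))  # light requests not hashed to the heavy shard
```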

The Pragmatic Engineer’s Reality Check

Is Dragonfly a perfect 'Redis killer'? Not quite, at least not for everyone yet. Redis has more than a decade's head start. If your stack relies heavily on modules like RediSearch or RedisJSON, you'll find that Dragonfly is still working toward full feature parity. Furthermore, for a small side project with 100 users, you won't notice the difference. The up-to-25x throughput advantage Dragonfly advertises only shows up when you are pushing high concurrency and saturating modern NICs.

However, for infrastructure architects, the landscape of high-performance in-memory data stores has shifted. Dragonfly is designed as a drop-in replacement: it speaks both the Redis (RESP) and Memcached protocols. You can point your existing Go or Python clients at a Dragonfly endpoint and, in most cases, it just works. You get the simplicity of a single instance with the power of a massive cluster.
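"Speaks the Redis protocol" concretely means the server accepts RESP frames. The minimal Python encoder below shows the bytes any Redis client puts on the wire for a command; because Dragonfly parses the same frames, an unmodified client can talk to it. The helper name is our own, and real clients also handle replies, pipelining, and connection management.

```python
def encode_command(*args):
    """Encode a client command as a RESP array of bulk strings."""
    out = [b"*%d\r\n" % len(args)]
    for arg in args:
        data = arg if isinstance(arg, bytes) else str(arg).encode("utf-8")
        # Bulk string: $<byte length>\r\n<bytes>\r\n
        out.append(b"$%d\r\n%s\r\n" % (len(data), data))
    return b"".join(out)


print(encode_command("SET", "user:1", "alice"))
# b'*3\r\n$3\r\nSET\r\n$6\r\nuser:1\r\n$5\r\nalice\r\n'
```

Sending these bytes over a TCP connection to either a Redis or a Dragonfly endpoint should return the simple-string reply `+OK\r\n`.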

The Verdict: Simplicity is a Feature

The complexity of managing Redis clusters is 'operational debt' we’ve accepted for too long. By moving that complexity into the engine’s architecture rather than the user’s infrastructure, Dragonfly lets us return to a world where we scale by changing an instance type, not by rewriting our sharding logic. Published benchmarks suggest that sustaining sub-millisecond latency while processing millions of requests per second on a single node is no longer a pipe dream.

If your current cache strategy feels like a house of cards held together by gossip protocols and manual rebalancing, it’s time to look at the shared-nothing alternative. Start by auditing your current Redis CPU utilization. If you see one core pegged at 100% while the rest sit idle, you’re the perfect candidate for a migration.
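One way to run that audit is to sample `redis-cli INFO cpu` twice and compare the cumulative `used_cpu_sys` and `used_cpu_user` counters, which report CPU seconds consumed by the server process. The Python helpers below are a sketch with names of our own, and the sample values are illustrative, not real measurements. A result that stays near 1.0 core under peak load suggests the event loop is saturated; with `io-threads` enabled the figure can exceed 1.0, since those counters cover the whole process.

```python
def parse_info(text):
    """Turn the 'key:value' lines of an INFO reply into a dict of strings."""
    fields = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):  # skip blanks and section headers
            continue
        key, _, value = line.partition(":")
        fields[key] = value
    return fields


def cores_busy(info_before, info_after, wall_seconds):
    """Average cores the server process kept busy between two INFO cpu samples."""
    def cpu_seconds(text):
        f = parse_info(text)
        return float(f["used_cpu_sys"]) + float(f["used_cpu_user"])

    return (cpu_seconds(info_after) - cpu_seconds(info_before)) / wall_seconds


# Illustrative samples taken 10 seconds apart.
before = "# CPU\r\nused_cpu_sys:10.000000\r\nused_cpu_user:20.000000\r\n"
after = "# CPU\r\nused_cpu_sys:13.000000\r\nused_cpu_user:26.500000\r\n"
print(cores_busy(before, after, 10.0))  # ~0.95 cores: one core nearly saturated
```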

Have you experimented with DragonflyDB in production yet, or are you sticking with the tried-and-true Redis Cluster? Let's discuss the trade-offs in the comments below.