Edge Computing vs. Cloud: Navigating the Hybrid Future

In a world driven by real-time decisions, where data is processed matters more than ever.

For over a decade, cloud computing has been the backbone of scalable applications. From startups to enterprises, the cloud solved problems of storage, compute, and global access.

But as we enter the era of IoT, AI, robotics, and real-time systems, a critical limitation has emerged:

Latency is the new bottleneck.

Sending data to centralized cloud servers introduces delays that are unacceptable for time-sensitive applications.

The Latency Problem

Even small delays (100–200 ms) can be critical in:

- Autonomous vehicles

- Industrial robotics

- AR/VR systems

- Real-time healthcare monitoring

Edge Computing Solution:

Process data closer to the source, which can cut round-trip latency to under 10 ms.
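To make these numbers concrete, here is a minimal sketch of how far a vehicle travels while it waits for a response. The 100 km/h speed and the helper name `distance_travelled_m` are illustrative assumptions, not figures from any particular system.

```python
def distance_travelled_m(speed_kmh: float, latency_ms: float) -> float:
    """Distance covered (in metres) while waiting latency_ms for a response."""
    speed_m_per_s = speed_kmh * 1000 / 3600  # km/h -> m/s
    return speed_m_per_s * (latency_ms / 1000)  # ms -> s


# Compare a cloud-style round trip (150 ms) with an edge-style one (10 ms).
for latency_ms in (150, 10):
    d = distance_travelled_m(100, latency_ms)
    print(f"{latency_ms} ms at 100 km/h: {d:.2f} m travelled")
```

At that assumed speed, the vehicle covers roughly 4.2 m during a 150 ms round trip but only about 0.3 m during a 10 ms one.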


Edge vs Cloud: Key Differences

| Factor | Edge Computing | Cloud Computing |
|---|---|---|
| Latency | Ultra-low (<10 ms) | Moderate (50–200 ms) |
| Processing | Near-device | Centralized |
| Scalability | Limited | High |
| Bandwidth | Reduced usage | Higher usage |

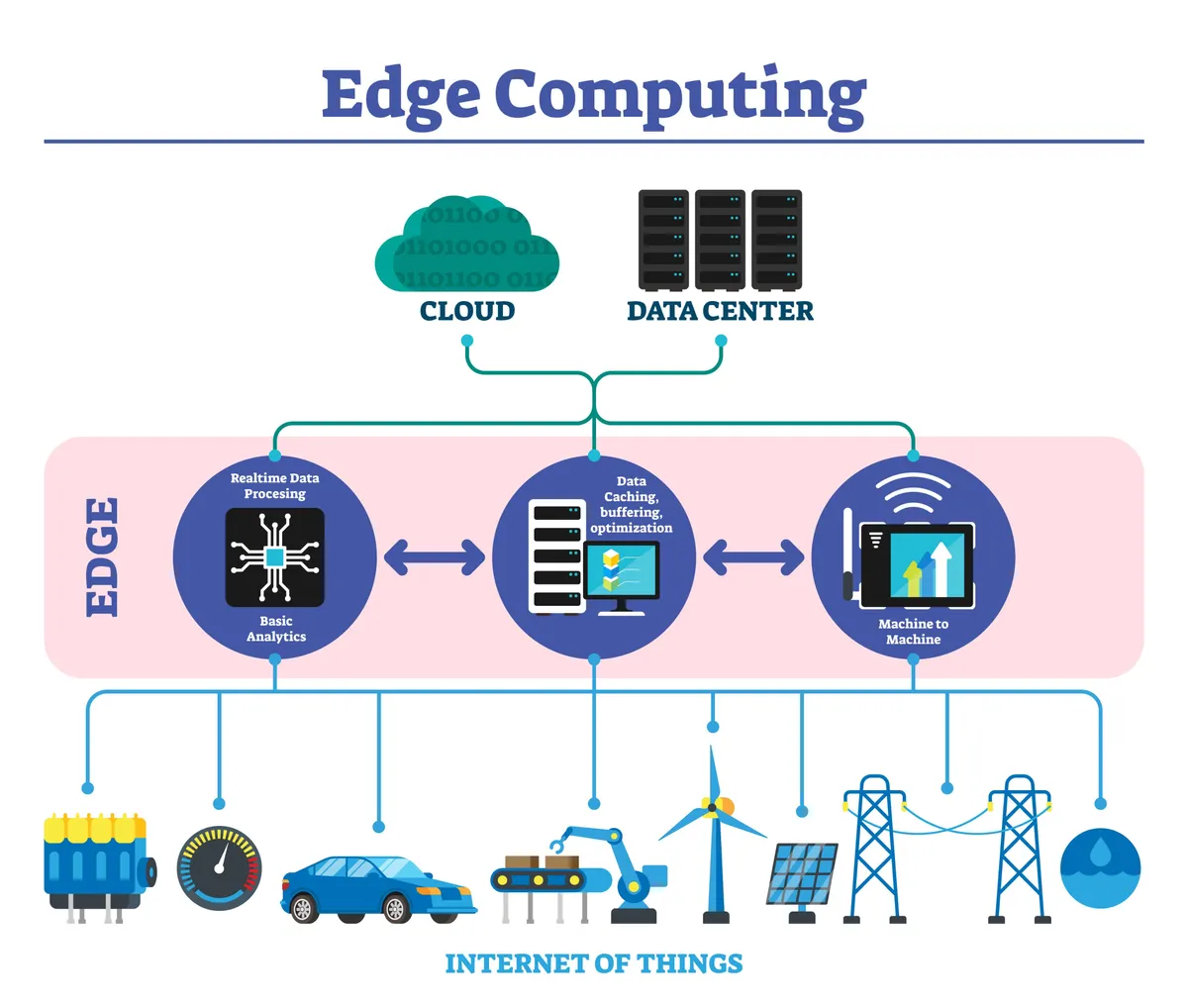

The Hybrid Architecture

The future lies in combining both:

- Edge: Real-time actions, filtering, privacy

- Cloud: Storage, analytics, AI training

Workflow:

Edge handles instant decisions → Cloud handles deep intelligence → Edge executes refined outcomes.
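The loop above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions: `EdgeNode`, `CloudService`, the sensor readings, and the "20% above the long-run mean" rule are all hypothetical stand-ins, and the cloud call is an in-process method rather than a real network request.

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class EdgeNode:
    threshold: float                       # alert threshold, later refined by the cloud
    buffer: list = field(default_factory=list)

    def handle(self, reading: float) -> str:
        """Instant local decision with no network round trip."""
        self.buffer.append(reading)
        return "ALERT" if reading > self.threshold else "OK"

    def flush_summary(self) -> dict:
        """Build a compact summary to send upstream instead of every raw reading."""
        summary = {
            "count": len(self.buffer),
            "mean": mean(self.buffer),
            "max": max(self.buffer),
        }
        self.buffer.clear()
        return summary


class CloudService:
    """Stand-in for heavier analytics or model training."""

    def __init__(self) -> None:
        self.history = []

    def refine_threshold(self, summary: dict) -> float:
        self.history.append(summary)
        # Toy rule (assumption): alert at 20% above the long-run mean.
        long_run_mean = mean(s["mean"] for s in self.history)
        return round(long_run_mean * 1.2, 2)


edge = EdgeNode(threshold=80.0)
cloud = CloudService()

# 1. Edge handles instant decisions.
decisions = [edge.handle(r) for r in (70.0, 75.0, 90.0, 65.0)]
# 2. Cloud handles deeper analysis on the summarised data.
new_threshold = cloud.refine_threshold(edge.flush_summary())
# 3. Edge executes with the refined parameter.
edge.threshold = new_threshold
```

Only the summary crosses the network here, which is also where the bandwidth saving in the comparison table comes from.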


Security & Privacy

- Local processing reduces exposure

- Less data transferred over the internet

- Easier compliance with data-privacy and data-residency regulations
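One way local processing can reduce exposure is to strip or pseudonymise identifiers on the device before anything is uploaded. The sketch below assumes a hypothetical health-monitoring record; the field names, the `prepare_for_upload` helper, and the key handling are illustrative only. Keyed hashing is pseudonymisation, not anonymisation, and does not by itself guarantee regulatory compliance.

```python
import hashlib
import hmac

# Hypothetical direct identifiers that should never leave the device.
SENSITIVE_FIELDS = {"name", "address"}


def prepare_for_upload(record: dict, key: bytes) -> dict:
    """Drop direct identifiers and pseudonymise the patient ID before upload."""
    safe = {k: v for k, v in record.items() if k not in SENSITIVE_FIELDS}
    # Keyed hash (HMAC-SHA256) so the cloud sees a stable pseudonym, not the raw ID.
    digest = hmac.new(key, str(record["patient_id"]).encode(), hashlib.sha256)
    safe["patient_id"] = digest.hexdigest()[:16]
    return safe


raw = {
    "patient_id": "P-1001",
    "name": "Jane Doe",
    "address": "1 Example Street",
    "heart_rate": 72,
}
upload = prepare_for_upload(raw, key=b"device-local-secret")
print(sorted(upload))  # only non-sensitive fields remain
```

The key stays on the edge device in this sketch, so the cloud can correlate readings from the same patient without ever seeing the original identifier.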

Key Insight:

Keeping data closer makes systems faster, safer, and more reliable.


Final Thought

"The future of computing is distributed — intelligently balancing edge speed with cloud power."

Mastering this balance will define the next generation of scalable systems.