The Infrastructure Middleman is Dead

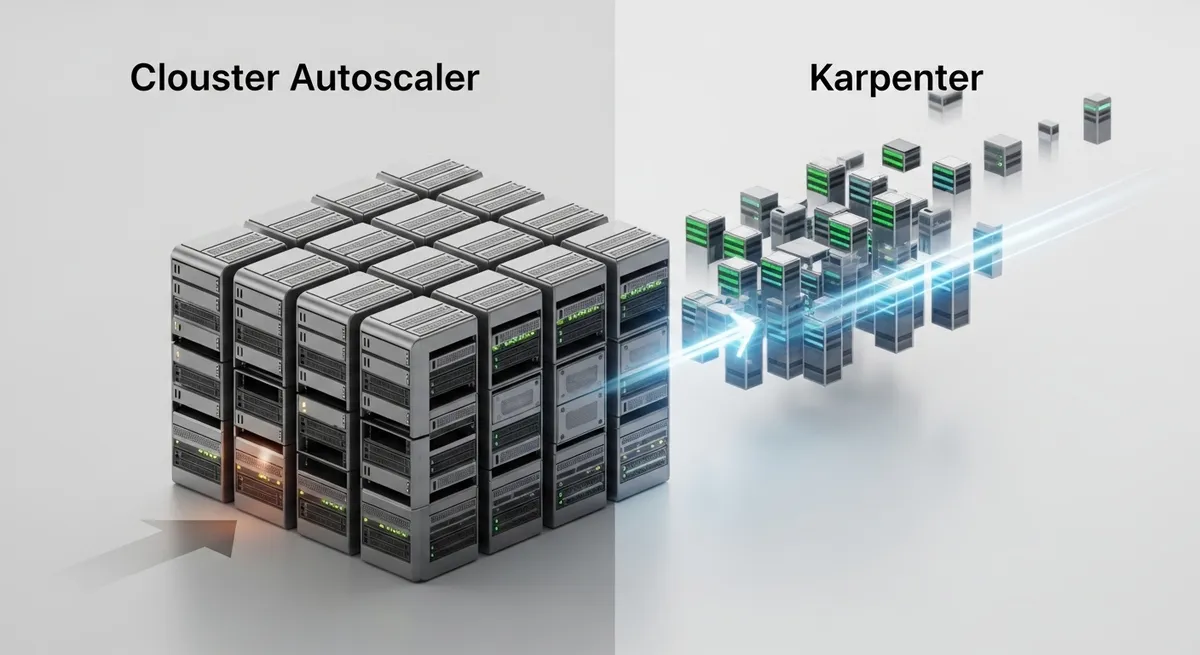

Stop me if you've heard this one before: your Kubernetes cluster is sitting at 25% CPU utilization, yet you can’t schedule a single new pod because your Auto Scaling Group is locked into a specific instance type that’s currently out of stock. You spend your weekends tuning instance groups, guessing whether an m5.large or a c5.xlarge is the better 'generic' fit for your developers' unpredictable workloads. We’ve been tolerating this friction for years, but it’s time to call it what it is: a legacy mindset. The debate of Karpenter vs Cluster Autoscaler isn't just about speed; it's a fundamental shift from 'Infrastructure-First' to 'Workload-First' compute.

The Legacy Bottleneck: Cluster Autoscaler

For a long time, the Kubernetes Cluster Autoscaler (CA) was the only game in town. It works by monitoring for pending pods and then reaching out to your cloud provider to increment the 'desired capacity' of a pre-defined node group. While reliable, this approach is inherently reactive and rigid. CA doesn't provision nodes itself; on AWS, it asks an Auto Scaling Group (ASG) to do it, and the ASG can only launch the instance types it was configured with. This 'middleman' architecture adds latency. It’s common to see nodes take 3 to 5 minutes to join a cluster, during which your critical application pods sit in a Pending state.
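To make the 'desired capacity' mechanic concrete, here is a toy Python model of that scale-up step. It is a sketch, not CA's actual code: `NodeGroup`, `scale_up`, and the `pods_per_node` shortcut are hypothetical names invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class NodeGroup:
    """Toy stand-in for an ASG-backed node group (hypothetical model)."""
    name: str
    instance_type: str  # fixed when the group is created
    desired: int
    max_size: int


def scale_up(group: NodeGroup, pending_pods: int, pods_per_node: int) -> int:
    """Raise desired capacity to cover pending pods; return nodes requested."""
    needed = -(-pending_pods // pods_per_node)  # ceiling division
    granted = min(needed, group.max_size - group.desired)
    group.desired += granted  # the only lever available: a bigger number
    return granted


group = NodeGroup("general", "m5.large", desired=3, max_size=5)
requested = scale_up(group, pending_pods=7, pods_per_node=2)
print(requested, group.desired)
```

Note what the function cannot do: it never picks a different instance type, and it stops at `max_size` even if pods are still pending.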

Moreover, CA forces you into the 'Static Node Group' trap. If you need GPUs for an AI task, you build a GPU node group. Need high-memory for a database? That’s another node group. Pretty soon, your cluster configuration is a sprawling mess of YAML, and you're paying for 'warm' idle instances in each group just to avoid slow cold starts.

Enter Karpenter: Just-in-Time Infrastructure

Karpenter flips the script. Instead of managing groups of identical instances, Karpenter looks at the specific resource requests, constraints, and tolerations of your pending pods and talks directly to the EC2 Fleet API. It asks, 'What is the cheapest, most efficient instance available right now that fits this exact pod?' and then provisions it immediately.
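The selection question above can be sketched in a few lines of Python. This is a deliberately simplified illustration, not Karpenter's real scheduler: the `cheapest_fit` helper is hypothetical, the hourly prices are made-up placeholders, and it ignores daemon overhead, Spot availability, and batching of multiple pods.

```python
from typing import NamedTuple, Optional


class InstanceType(NamedTuple):
    name: str
    vcpu: float
    memory_gib: float
    arch: str
    hourly_usd: float  # illustrative placeholder, not a real price quote


CATALOG = [
    InstanceType("m5.large", 2, 8, "amd64", 0.10),
    InstanceType("c5.xlarge", 4, 8, "amd64", 0.17),
    InstanceType("m6g.large", 2, 8, "arm64", 0.08),
    InstanceType("r5.xlarge", 4, 32, "amd64", 0.25),
]


def cheapest_fit(cpu: float, memory_gib: float, allowed_archs: set,
                 catalog=CATALOG) -> Optional[InstanceType]:
    """Return the cheapest instance type that satisfies the pod's requests."""
    candidates = [
        i for i in catalog
        if i.vcpu >= cpu and i.memory_gib >= memory_gib and i.arch in allowed_archs
    ]
    return min(candidates, key=lambda i: i.hourly_usd, default=None)


# A pod that tolerates both architectures lands on the cheaper ARM option.
print(cheapest_fit(1.5, 4, {"amd64", "arm64"}))
# The same pod pinned to amd64 gets a different answer.
print(cheapest_fit(1.5, 4, {"amd64"}))
```

The point of the sketch is the direction of the decision: the pod's requirements drive the instance choice, rather than a pre-built group dictating what the pod gets.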

This is what we call Just-in-Time infrastructure. In a Karpenter-managed environment, the concept of a 'node group' effectively disappears. One single NodePool can manage a heterogeneous mix of Spot and On-Demand instances, spanning multiple architectures (ARM64 and AMD64) and various instance families. According to Karpenter's official documentation, the v1.0 API has stabilized this model around NodePool and NodeClass resources (EC2NodeClass on AWS), which replace the older 'Provisioner' concept and are intended for production use.
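As a rough illustration of what 'one single NodePool' looks like, the Python snippet below builds a manifest as a dictionary and prints it as JSON, which `kubectl apply -f` accepts alongside YAML. The field names reflect my reading of the `karpenter.sh/v1` API and should be checked against the documentation for your version; the pool name `general`, the EC2NodeClass named `default`, and the CPU limit are assumptions made for the example.

```python
import json

# Hypothetical NodePool covering both architectures and both capacity types.
node_pool = {
    "apiVersion": "karpenter.sh/v1",
    "kind": "NodePool",
    "metadata": {"name": "general"},  # assumed name
    "spec": {
        "template": {
            "spec": {
                # Assumes an EC2NodeClass called "default" already exists.
                "nodeClassRef": {
                    "group": "karpenter.k8s.aws",
                    "kind": "EC2NodeClass",
                    "name": "default",
                },
                "requirements": [
                    {"key": "kubernetes.io/arch", "operator": "In",
                     "values": ["amd64", "arm64"]},
                    {"key": "karpenter.sh/capacity-type", "operator": "In",
                     "values": ["spot", "on-demand"]},
                    {"key": "karpenter.k8s.aws/instance-category", "operator": "In",
                     "values": ["c", "m", "r"]},
                ],
            }
        },
        # Arbitrary ceiling on total vCPUs this pool may provision.
        "limits": {"cpu": "200"},
    },
}

print(json.dumps(node_pool, indent=2))
```

Each entry under `requirements` widens or narrows the set of instances Karpenter may choose from, which is how a single pool can stand in for several static node groups.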

Why the Industry is Migrating

1. Dramatic Cost Reduction

The financial impact of Karpenter vs Cluster Autoscaler is often the biggest driver for adoption. Because Karpenter can aggressively 'bin-pack' (moving pods around to ensure nodes are fully utilized), organizations are seeing substantial savings. A recent report by InfoQ highlighted cases where companies achieved cost reductions of up to 70% by leveraging Karpenter’s ability to mix ARM64 instances and Spot capacity dynamically. CA can remove a node only when it is empty or when its utilization drops below a threshold and its pods fit on other existing nodes; it never swaps a node for a cheaper instance type. Karpenter, by contrast, actively seeks out consolidation. If it finds that three underutilized nodes can be replaced by one cheaper instance, it handles the migration automatically.

2. Scaling in Seconds, Not Minutes

In modern DevOps, four minutes is an eternity. Karpenter typically brings nodes online in 45 to 60 seconds. By bypassing the abstraction layers of ASGs and interacting directly with the cloud provider’s capacity APIs, Karpenter significantly reduces the scheduling latency. This is a game-changer for bursty workloads, such as CI/CD runners or real-time data processing, where the ability to scale up and down rapidly directly affects developer productivity and user experience.

3. Native Support for AI and GPU Workloads

If you're running AI/ML training or inference on EKS, node provisioning with Karpenter is practically a requirement. Managing NVIDIA H100s or AWS Trainium instances with static node groups is a nightmare; they are expensive to keep idle and prone to capacity shortages. Karpenter can be configured to hunt for available GPU capacity across multiple instance types, ensuring your training jobs start as soon as hardware is available, without requiring a human to manually adjust scaling groups.

The Nuance: Is It Always Better?

I’m an advocate for Karpenter, but I’m not blind to its complexities. If you are operating in a multi-cloud environment where you need identical tooling across AWS, GCP, and Azure, Cluster Autoscaler remains the more 'stable' choice for cross-provider parity. While Karpenter has made strides in supporting other clouds, it is undeniably an AWS-first tool.

Furthermore, Karpenter is aggressive. If you don't have well-defined Pod Disruption Budgets (PDBs), Karpenter’s consolidation logic might terminate nodes while your application is in a sensitive state. It requires a higher level of 'Kubernetes maturity' from your engineering team. You can't just set it and forget it; you need to ensure your applications are truly cloud-native and resilient to frequent restarts.
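To see why PDBs matter here, consider how many voluntary evictions a budget permits at a given moment. The Python sketch below mirrors the basic arithmetic Kubernetes applies to integer-valued budgets; `disruptions_allowed` is a hypothetical helper, and percentage values and unhealthy-pod eviction policies are left out.

```python
from typing import Optional


def disruptions_allowed(current_healthy: int, expected_pods: int,
                        min_available: Optional[int] = None,
                        max_unavailable: Optional[int] = None) -> int:
    """Number of pods that may be voluntarily evicted right now.

    Exactly one of min_available / max_unavailable must be set,
    as in a real PodDisruptionBudget. Integers only in this sketch.
    """
    if (min_available is None) == (max_unavailable is None):
        raise ValueError("set exactly one of min_available or max_unavailable")
    if min_available is not None:
        desired_healthy = min_available
    else:
        desired_healthy = expected_pods - max_unavailable
    return max(0, current_healthy - desired_healthy)


# 3 replicas, all healthy, minAvailable=2: one pod may be evicted.
print(disruptions_allowed(3, 3, min_available=2))
# One replica is already down: the budget blocks further evictions.
print(disruptions_allowed(2, 3, min_available=2))
```

A workload with no PDB has no such floor, so a consolidation pass is free to drain every node its replicas happen to share.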

Conclusion: The End of the Static Mindset

The era of treating Kubernetes nodes like fixed assets is over. The Karpenter vs Cluster Autoscaler debate has a clear winner for teams operating on AWS: Karpenter. By embracing Just-in-Time infrastructure, you stop paying for what you thought you needed and start paying for exactly what your pods are using. As demonstrated by real-world case studies showing upwards of 57% cost reductions, the efficiency gains are too large to ignore.

If you're still managing dozens of Managed Node Groups, your first task for next sprint should be a Karpenter PoC. Start by migrating your non-production workloads to a single NodePool and watch how much waste you can trim when your infrastructure finally starts listening to your code.