The End of the Middleman?

I remember the first time I built a real-time dashboard. I spent three weeks perfecting a Node.js middleware that did nothing but fetch 100MB of JSON from a database, aggregate it into three numbers, and ship it to the frontend. It felt sophisticated until I realized the user was waiting four seconds for a spinner just to see a single bar chart update. We've been taught that 'data belongs on the server,' but that paradigm is crumbling. Today, we are seeing a massive shift: the rise of the in-browser database engine that makes our old REST APIs look like dial-up.

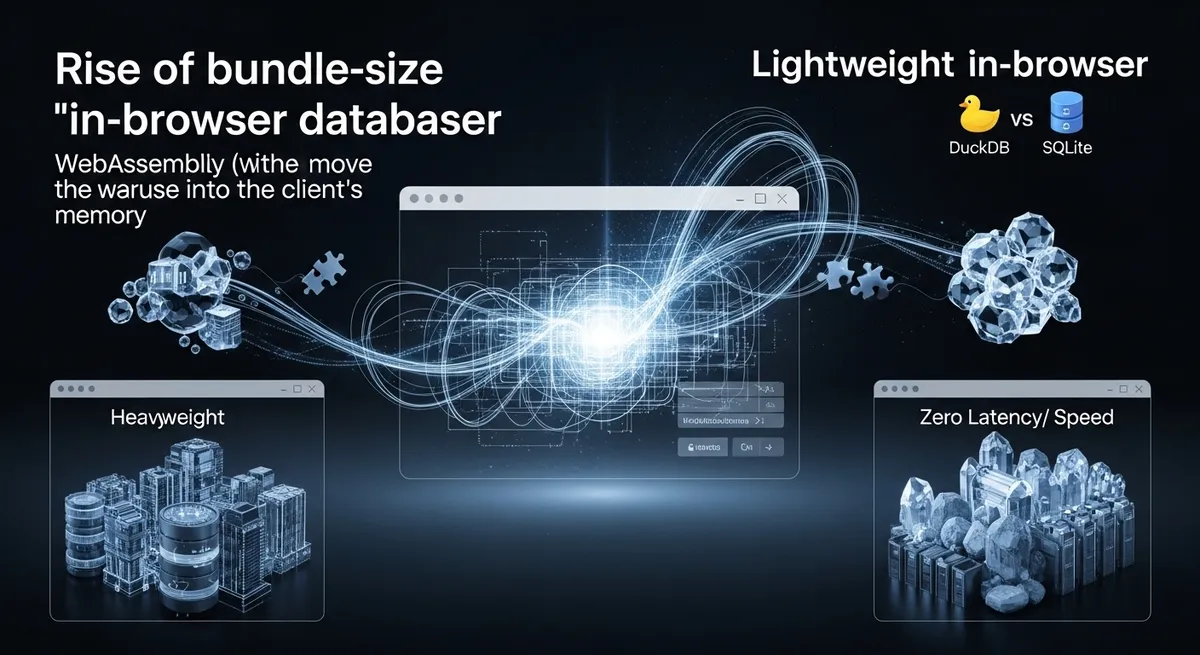

While everyone is talking about 'Local-First' as a trend, the real technical breakthrough isn't just offline sync; it’s the raw power of WebAssembly. Comparing DuckDB-Wasm and SQLite Wasm, frontend architects are discovering they can move much of the data warehouse workload into the client, querying millions of rows with sub-second latency and no round-trip to a backend server for each query.

OLAP vs OLTP: Choosing Your In-Browser Weapon

Before we go further, we have to address the architectural split. You wouldn't use a screwdriver to drive a nail, and you shouldn't use the wrong Wasm engine for your data. The debate over DuckDB-Wasm vs SQLite Wasm usually boils down to how your data is structured and how you intend to read it.

SQLite Wasm: The King of Transactions (OLTP)

SQLite is the gold standard for transactional state. If you are building a 'Notion-like' application where users are constantly editing rows, adding tags, and expecting those changes to persist across sessions, SQLite is your best friend. With the introduction of the Origin Private File System (OPFS), SQLite finally has a high-performance persistence layer that bypasses the sluggishness of IndexedDB. As noted by PowerSync, the VFS layer in SQLite Wasm now allows for near-native I/O, making it the definitive choice for local-first persistence.
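Before committing to OPFS-backed storage, it can help to check that the browser exposes it at all. The following is a minimal sketch, assuming OPFS is reached through `navigator.storage.getDirectory`; the helper names, the `ScopeLike` shape, and the fallback label are hypothetical and not part of any SQLite Wasm API.

```typescript
// Minimal shape of the environment this check inspects.
// Declared locally so the sketch type-checks without DOM typings.
interface StorageLike {
  getDirectory?: unknown;
}
interface ScopeLike {
  navigator?: { storage?: StorageLike };
}

// True when the given global scope exposes the OPFS entry point.
// Hypothetical helper for illustration, not a library function.
function supportsOpfs(scope: ScopeLike): boolean {
  return typeof scope.navigator?.storage?.getDirectory === "function";
}

// Picks a persistence label the app can act on.
// The two labels are assumptions made for this example.
function pickPersistence(scope: ScopeLike): "opfs" | "indexeddb-fallback" {
  return supportsOpfs(scope) ? "opfs" : "indexeddb-fallback";
}
```

In a real page the argument would be the worker's or window's global scope. Passing the scope in, rather than reading a global directly, keeps the check easy to exercise outside a browser.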

DuckDB-Wasm: The Analytical Powerhouse (OLAP)

But what if your app needs to crunch numbers? If you're asking 'What was the average order value for users in Berlin over the last six months?', SQLite will struggle as your dataset grows. This is where DuckDB-Wasm shines. DuckDB, which reached its stable 1.0 release in June 2024, is a columnar engine. Instead of reading an entire row to find one value, it only scans the columns it needs. In recent benchmarks for 2025, DuckDB-Wasm completed complex analytical queries in 250ms that took SQLite Wasm over 4.5 seconds. That’s roughly an 18x speedup (4.5s / 0.25s), and it changes what’s possible in a browser.
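To make the row-versus-column distinction concrete, here is a small sketch in plain TypeScript. It is not how either engine is implemented; the `OrderRow` and `OrderColumns` types and both functions are hypothetical, and they only illustrate that a columnar scan touches two arrays while a row scan walks every whole record.

```typescript
// Row-oriented layout: each order is one object, as a row store returns it.
interface OrderRow {
  id: number;
  city: string;
  amount: number;
  note: string;
}

// Column-oriented layout: one array per column, all the same length.
interface OrderColumns {
  id: number[];
  city: string[];
  amount: number[];
  note: string[];
}

// Average order value for one city, visiting whole rows.
function avgByCityRows(rows: OrderRow[], city: string): number {
  let sum = 0;
  let count = 0;
  for (const row of rows) {
    if (row.city === city) {
      sum += row.amount;
      count += 1;
    }
  }
  return count === 0 ? 0 : sum / count;
}

// Same answer, but only the `city` and `amount` arrays are read;
// `id` and `note` are never touched.
function avgByCityColumns(cols: OrderColumns, city: string): number {
  let sum = 0;
  let count = 0;
  for (let i = 0; i < cols.city.length; i++) {
    if (cols.city[i] === city) {
      sum += cols.amount[i];
      count += 1;
    }
  }
  return count === 0 ? 0 : sum / count;
}
```

With wide tables, the untouched columns are where the savings come from: the fewer columns a query names, the less memory a columnar scan has to walk.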

Building Zero-Backend Dashboards

The most radical application of this tech is the 'Zero-Backend' dashboard. Imagine hosting a 50GB dataset on a cheap object store like Cloudflare R2 or Amazon S3 as a Parquet file. Historically, you'd need a beefy Python or Go backend to query that file. With DuckDB-Wasm, the browser uses HTTP Range Requests to 'stream' only the specific bytes it needs from that 50GB file. The client-side data processing happens entirely in a Web Worker, leaving the UI thread buttery smooth.
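DuckDB-Wasm issues these range requests internally, so you would not normally write them yourself. As an illustration of the idea only, the sketch below relies on one fact about the Parquet format: a file ends with a 4-byte little-endian footer length followed by the magic bytes `PAR1`. The function names are hypothetical.

```typescript
// Build an HTTP Range header value for `length` bytes starting at `start`.
function byteRange(start: number, length: number): string {
  return `bytes=${start}-${start + length - 1}`;
}

// Given the last 8 bytes of a Parquet file, return the footer metadata length.
// Layout of those bytes: [4-byte little-endian length]["PAR1"].
function readFooterLength(tail: Uint8Array): number {
  if (tail.length !== 8) throw new Error("expected the last 8 bytes of the file");
  const magic = String.fromCharCode(tail[4], tail[5], tail[6], tail[7]);
  if (magic !== "PAR1") throw new Error("not a Parquet file");
  return new DataView(tail.buffer, tail.byteOffset, 8).getUint32(0, true);
}

// Range header that fetches only the footer metadata of a file of `fileSize` bytes.
function footerRange(fileSize: number, footerLength: number): string {
  return byteRange(fileSize - 8 - footerLength, footerLength);
}
```

A client would send the resulting string as the `Range` request header, read the footer to learn where each column chunk lives, and then request only the chunks a query needs. That is why a 50GB file can be queried without downloading 50GB.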

This isn't just a cost saver; it's a privacy and speed win. No data leaves the user's machine during analysis, and there's no cold-start latency for a serverless function to wake up. You are effectively shipping a mini-BigQuery instance directly to the user's browser.

The Multi-Megabyte Elephant in the Room

Critics often point to bundle size as the dealbreaker. Shipping 2.5MB of Brotli-compressed WASM and 68KB of JavaScript for DuckDB-Wasm feels 'heavy' in an era of micro-libraries. However, we need to reframe the cost. If you ship a 2.5MB engine that eliminates 10 subsequent 500KB JSON API calls and provides instant interactivity, you've actually improved the user experience. The initial 'weight' is an investment that pays dividends in every click thereafter.
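The trade-off can be checked with back-of-the-envelope arithmetic. This sketch uses only the figures quoted above and deliberately ignores the bytes the engine still has to fetch for the data itself, so treat it as a rough model rather than a measurement.

```typescript
const KB = 1024;
const MB = 1024 * KB;

// Figures quoted in the text.
const engineBytes = 2.5 * MB + 68 * KB; // compressed Wasm plus JavaScript glue
const apiCallBytes = 500 * KB;          // one JSON API response

// Bytes transferred after `calls` interactions if each one hits the API.
function apiTransfer(calls: number): number {
  return calls * apiCallBytes;
}

// Smallest number of avoided API calls at which the one-time
// engine download becomes the cheaper option.
function breakEvenCalls(): number {
  return Math.ceil(engineBytes / apiCallBytes);
}
```

Under these assumptions the engine pays for itself after six avoided calls, and ten calls would have moved almost twice the engine's size over the wire.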

There are, of course, technical hurdles. Because these engines use 32-bit Wasm builds, there is a hard 4GB ceiling on addressable memory, and browsers may allow less in practice. While DuckDB can query files much larger than 4GB by streaming them, it cannot hold a 10GB intermediate join table in memory. Furthermore, to get multi-threaded performance, you must serve your site with specific security headers (COOP and COEP) so the page is cross-origin isolated and SharedArrayBuffer becomes available. It’s a small price to pay for what is essentially a supercomputer in a tab.
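Both constraints are easy to express in code. The sketch below is illustrative: the header names and values are the standard ones, and the 4GB figure follows from 65,536 Wasm pages of 64 KiB each, but the helper names and the `IsolationScope` shape are hypothetical.

```typescript
// Response headers that make a page cross-origin isolated, which browsers
// require before exposing SharedArrayBuffer.
function isolationHeaders(): Record<string, string> {
  return {
    "Cross-Origin-Opener-Policy": "same-origin",
    "Cross-Origin-Embedder-Policy": "require-corp",
  };
}

// The two globals a page can inspect to see whether threads are usable.
interface IsolationScope {
  crossOriginIsolated?: boolean;
  SharedArrayBuffer?: unknown;
}

function canUseThreads(scope: IsolationScope): boolean {
  return scope.crossOriginIsolated === true && typeof scope.SharedArrayBuffer === "function";
}

// 32-bit Wasm addresses memory in 64 KiB pages, with at most 65,536 pages.
const WASM_PAGE_BYTES = 64 * 1024;
const WASM32_MAX_PAGES = 65536;
const WASM32_MAX_BYTES = WASM_PAGE_BYTES * WASM32_MAX_PAGES; // 4 GiB

// Rough check: could an intermediate result of this size fit at all?
function fitsInWasm32(bytes: number): boolean {
  return bytes < WASM32_MAX_BYTES;
}
```

The memory check is an upper bound only. The engine, its buffers, and the browser's own limits all take their share, so the practical budget for a single intermediate result is well below the ceiling.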

Hybrid Architectures: The Best of Both Worlds

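The most sophisticated frontend architects aren't choosing one; they are using both. They use SQLite Wasm for the 'source of truth' (user settings, draft posts, and local logs), leveraging its robust persistence via OPFS. Then they use DuckDB-Wasm as an ephemeral engine to run high-speed analytics over that same data by exporting it to Apache Arrow, a columnar in-memory format that DuckDB-Wasm can ingest directly. SQLite does not produce Arrow natively, so the export means reading rows out of SQLite and converting them into Arrow columns. That conversion is a copy, but once the data is in Arrow form DuckDB can scan it without further reshaping.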
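The bridge between the two engines is essentially a pivot from rows to columns. Here is a minimal sketch of that step in plain TypeScript, assuming the rows have already been read out of SQLite as objects. The types and the `rowsToColumns` helper are hypothetical; real code would hand the resulting arrays to an Arrow library rather than stop here.

```typescript
type Cell = string | number | null;
type Row = Record<string, Cell>;
type Columns = Record<string, Cell[]>;

// Pivot row objects into one array per column, the layout columnar
// formats such as Arrow are built around. Missing values become null.
function rowsToColumns(rows: Row[], columnNames: string[]): Columns {
  const columns: Columns = {};
  for (const name of columnNames) {
    columns[name] = rows.map((row) => row[name] ?? null);
  }
  return columns;
}
```

Passing the column names explicitly keeps the output schema stable even when some rows lack a field, which matters once the arrays are given fixed column types.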

Implementation Checklist

- Persistence: Use SQLite with OPFS if you need data to survive a page refresh without a backend.

- Analytics: Use DuckDB for filtering, grouping, and aggregating datasets over 100k rows.

- Security: Set Cross-Origin-Opener-Policy: same-origin and Cross-Origin-Embedder-Policy: require-corp to unlock multi-threading.

- Format: Store your large datasets in Parquet to leverage DuckDB’s columnar efficiency.

The Future is Client-Side

We are moving toward a world where the backend is just a dumb pipe for raw files and the frontend is where the 'intelligence' lives. When you compare DuckDB-Wasm vs SQLite Wasm, you aren't just comparing two libraries; you're choosing an architectural philosophy. Do you want to keep paying for expensive cloud compute to aggregate data, or do you want to harness the idle CPU cycles on your user's MacBook Pro?

The era of the heavyweight, backend-dependent dashboard is over. It's time to start building applications that are as fast as the hardware they run on. Are you ready to delete your aggregation APIs and let the browser do the heavy lifting?